Apple Vision Pro

Apple Vision Pro was introduced during WWDC 2023 as a preview of the future for Apple. It is a mixed-reality headset with augmented reality and virtual reality applications. The headset won't be available for purchase until early 2024 and will cost $3,499.

● AR/VR modes selected with a Digital Crown

● Portable with a 2-hour battery attachment

● Accessory ecosystem for straps and Light Seal

● More than 4K per eye

● M2 and R1 processors

● Launching in early 2024 for $3,499


Apple has been rumored to be working on some kind of Apple VR headset for years, and it's finally here. The Apple Vision Pro isn't quite VR, though. Instead, it is mixed reality with AR and VR applications.

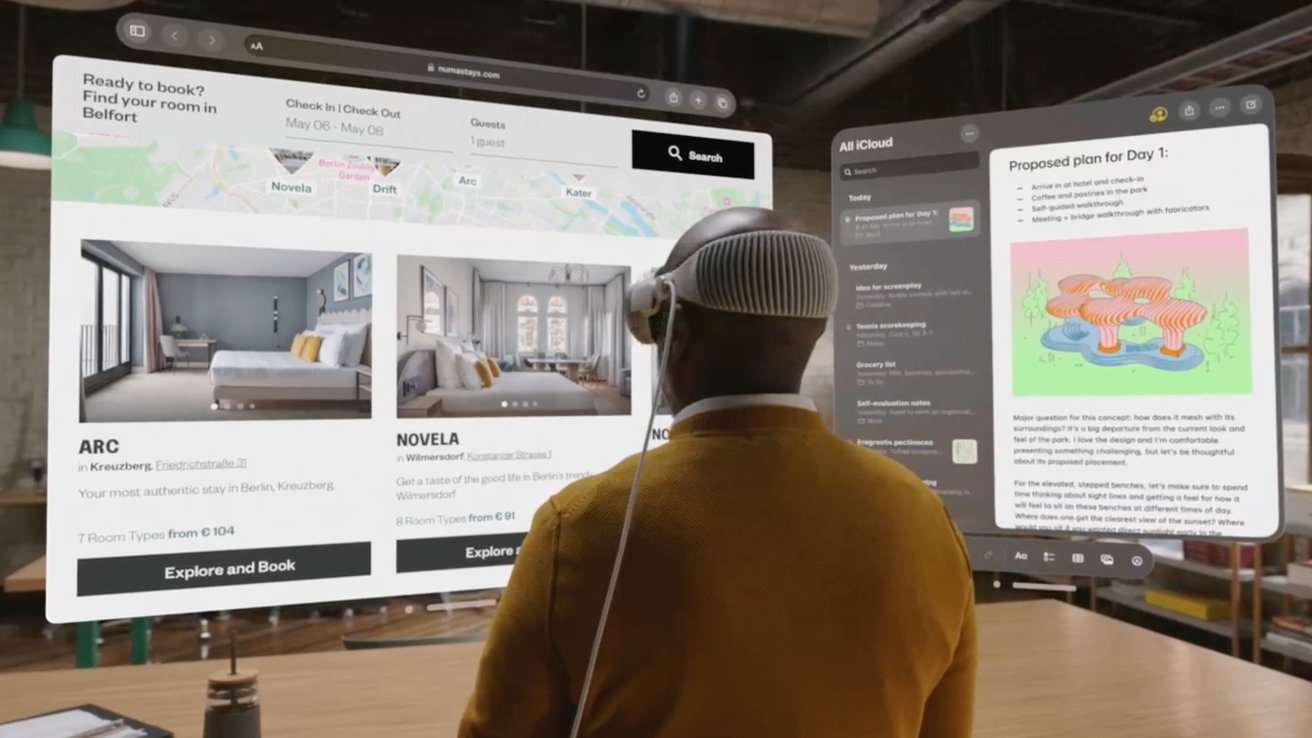

Apple refers to the headset and its operating system, visionOS, as spatial computing. They blend software with the world around the user via video passthrough.

Apple Vision Pro is a headset that obscures the user's vision, not a pair of goggles or glasses. But high-quality cameras pass 3D video of the environment to the user through a pair of pixel-dense displays.

It is seen as the first step into an entirely new kind of computing that could lead to the long-rumored Apple Glass — AR glasses. Even this headset, with its "pro" moniker, is likely just a stepping stone to a more budget-friendly Apple Vision model down the line.

Apple Vision Pro design

The ski goggle-like design that's been rumored since early 2021 ended up being the final product. A curved piece of glass acts as a lens for the multiple cameras, while the exterior case is aluminum.

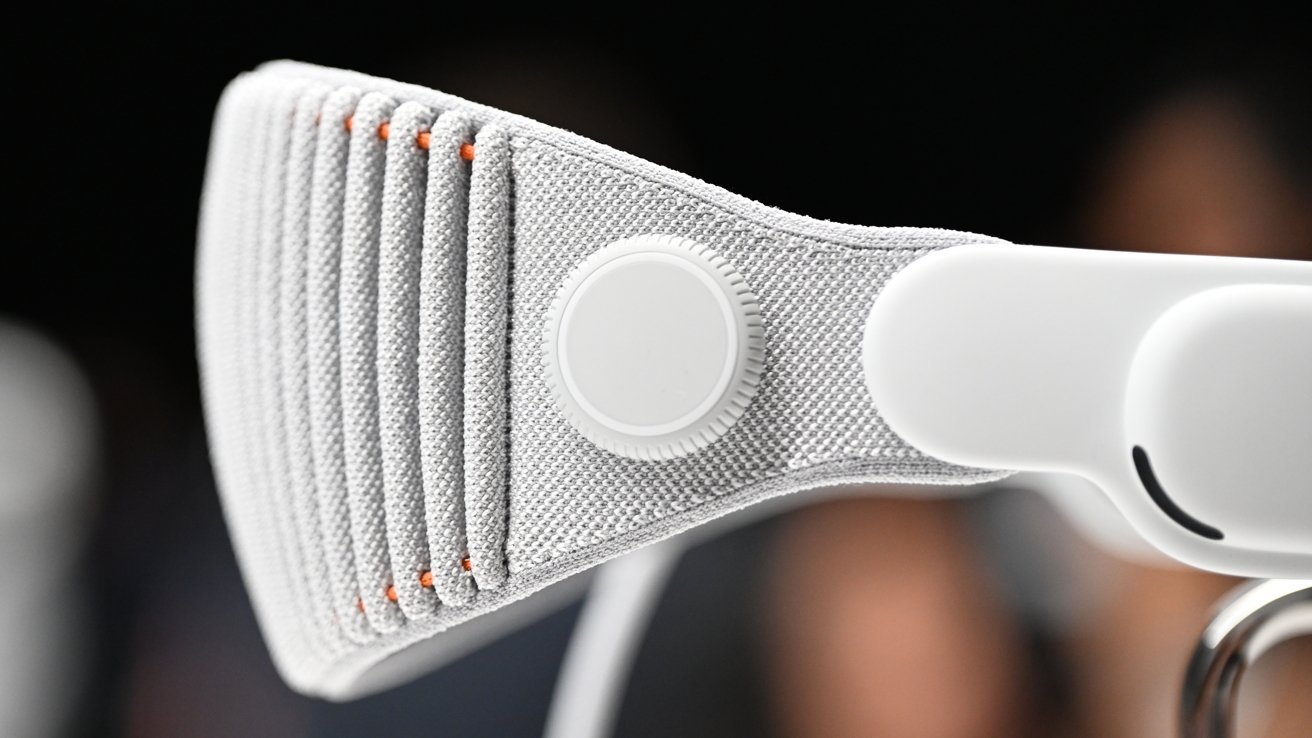

The headband is 3D-knitted as a single piece, ribbed, and attached using a mechanism that lets it be switched out when required. Providing cushioning and stretch, it also has a Fit Dial, so users can adjust it to their particular head size and shape.

The headset is so form-fitting that users can't wear glasses with it as they can with other headsets. A partnership with Zeiss allows for vision-correction lenses to be used, enabling glasses wearers to enjoy the experience.

The sides of Apple Vision Pro house small speakers that point spatial audio into the user's ears. The top has a button for controlling certain features like the 3D camera, and the Digital Crown on the right controls AR/VR immersion.

A power cable attaches to the side. It can either connect to an outlet for all-day use or a battery pack for 2 hours of portable use.

It appears that the battery pack is always connected and passes power through to the headset from an outlet via an integrated USB-C port. If the battery dies, the entire headset shuts down, as no backup power is available.

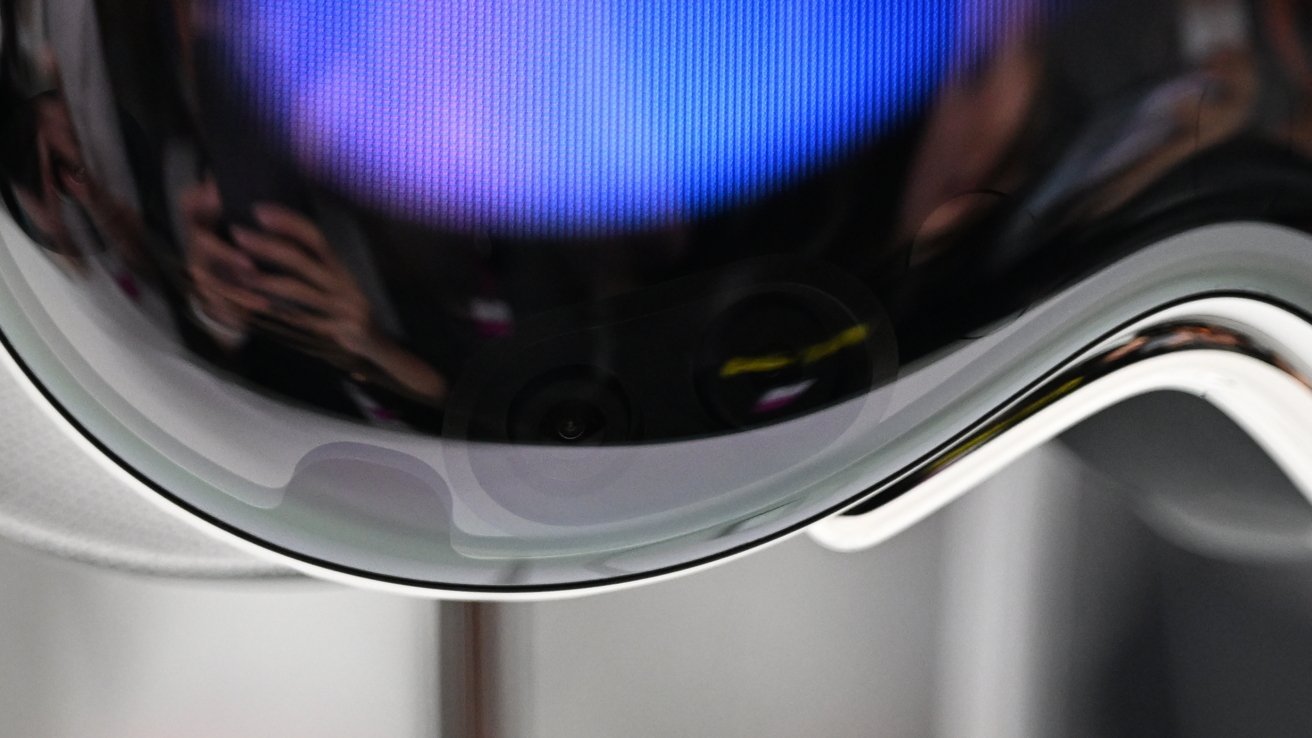

The front glass is also a display for a feature called EyeSight. It enables users to indicate if they are paying attention or not via an admittedly odd effect.

When users are immersed in the Apple VR mode with no view of the outside world, the glass shows a colorful waveform. When the user is working in AR, it shows a representation of the user's eyes with a parallax effect.

This was designed to ensure that users could feel confident in using the headset in any environment. However, it seems some social hurdles will have to be overcome before such technology is commonplace during social interactions.

Apple Vision Pro technology

What Apple revealed during WWDC in June was just a preview, so it was hazy on details. Exact specs like display resolution and refresh rate haven't been shared in a spec sheet, but some data has been learned through developer sessions.

Apple's headset is a standalone device running on an M2 processor and R1 co-processor. The dual-chip design enables spatial experiences — M2 processing visionOS and graphics, while R1 is dedicated to processing camera input, sensors, and microphones.

There is a 12-millisecond lag from camera to display, which users shouldn't be able to notice. Those who have worn the headset say that looking through the display feels like looking into the real world.

An eye-tracking system of LEDs and infrared cameras enables precise controls just by looking. Users can glance at a text input field and then speak to fill it in.

There are 12 cameras, five sensors, and six microphones. This all adds up to a heavier-than-average product when compared to competing VR headsets.

Two high-resolution cameras transmit over one billion pixels per second to the displays. Each display is the equivalent of a 4K TV per eye.

The display system uses a micro-OLED backplane with pixels seven and a half microns wide, allowing it to fit 23 million pixels in total. A custom three-element lens was designed to magnify the screen and make it wrap around as wide as possible for the user's vision.
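As a rough check on the "more than 4K per eye" claim, the quoted figures can be worked through in a few lines. This is a minimal sketch, not Apple's spec sheet: the 23 million pixel total and the 7.5-micron pixel width come from the text above, while the even split between two displays, the 3840 x 2160 definition of a 4K TV, and the square, gap-free pixel layout are assumptions made for illustration.

```python
# Figures quoted in the article
TOTAL_PIXELS = 23_000_000   # both displays combined
PIXEL_PITCH_UM = 7.5        # pixel width in microns

# Assumptions for illustration (not stated in the article)
DISPLAYS = 2                    # pixels assumed to be split evenly per eye
UHD_4K_PIXELS = 3840 * 2160     # a consumer 4K UHD TV panel


def pixels_per_eye(total=TOTAL_PIXELS, displays=DISPLAYS):
    """Pixel count of one display, assuming an even split."""
    return total // displays


def ratio_to_4k(per_eye, reference=UHD_4K_PIXELS):
    """How many times more pixels one display has than a 4K UHD panel."""
    return per_eye / reference


def active_area_mm2(per_eye, pitch_um=PIXEL_PITCH_UM):
    """Approximate lit area of one display, assuming square pixels with no gaps."""
    pitch_mm = pitch_um / 1000
    return per_eye * pitch_mm ** 2


if __name__ == "__main__":
    per_eye = pixels_per_eye()
    print(f"Pixels per eye: {per_eye:,}")
    print(f"Ratio to 4K UHD: {ratio_to_4k(per_eye):.2f}")
    print(f"Approx. active area: {active_area_mm2(per_eye):.0f} mm^2")
```

Under these assumptions, each display carries 11.5 million pixels, roughly 1.39 times the pixel count of a 4K TV, packed into an area of about 647 square millimeters. The real panel dimensions and aspect ratio may differ, since Apple has not published them.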

LiDAR and a TrueDepth camera work together to create a fused 3D map of the environment. Infrared flood illuminators work with other sensors to enhance hand tracking in low light.

There is a fan keeping the processors cool and small vent holes in the bottom of the headset.

Since Apple Vision Pro has multiple cameras, it can capture 3D content. Recorded 3D content can be viewed in the Photos app.

Optic ID, privacy, and security

For security, Apple introduces Optic ID as an iris-scanning system. Much like Face ID or Touch ID, it will be used to authenticate the user, enable Apple Pay purchases, and handle other security-related tasks.

Apple explains that Optic ID uses invisible LED light sources to analyze the iris. The result is compared to the enrolled data stored in the Secure Enclave, similar to the process used for Face ID and Touch ID. The Optic ID data is fully encrypted, not provided to apps, and never leaves the device itself.

Continuing the privacy theme, the areas where the user looks on the display and eye tracking data aren't shared with Apple or websites. Instead, data from sensors and cameras are processed at a system level so that apps don't necessarily need to "see" the user or their surroundings to provide spatial experiences.

Apple VR, AR, or MR

Apple avoided calling any experience offered by Apple Vision Pro a conventional name like "Apple VR." Instead, it stuck to its own marketing terms like Spatial Computing.

There has been some contention on what to call this headset. Some refer to it as Apple VR since users put on a headset and are shown content. Others call it Apple AR with options for full immersion thanks to the Digital Crown.

It seems both may be right, as this device is a mix of the two, or mixed reality (MR). While Apple may never refer to its headset that way, it is the easiest way to explain the type of content it offers.

Think of it this way — Oculus and PlayStation VR are easily classified as VR headsets since virtual reality experiences are their primary function. For Apple Vision Pro, VR is just a feature, not the entire purpose.

During the keynote and developer sessions, Apple used in-house marketing terms to refer to each experience. It calls 2D apps "windows," 3D objects "volumes," and fully immersive VR "spaces."

visionOS

The headset runs visionOS, and apps from iPhone and iPad are able to operate with little to no developer intervention. Apps shown in the 3D space are still 2D objects, but some elements are shaded and rendered differently to provide more depth.

Objects created in the USDZ format can be pulled into a space, but it seems developers will need to tool unique experiences for visionOS using specific frameworks. Apps previously built with ARKit will show up as a 2D window.

Full Apple VR experiences weren't the focal point of the keynote, but they do exist. Apple plans to film multiple 360-degree videos for users to become immersed in, not to mention third-party VR apps.

The visionOS SDK was provided to developers in late June. It includes a simulator to interact with software in a testing environment, but full on-device testing wasn't possible until hardware kits became available in July.

Public information learned from developer kits will likely be very limited. Apple has implemented strict privacy and security measures on the devices that will prevent developers from openly sharing their experiences.

The App Store for visionOS will begin showing up for beta testers later in 2023. That means developers who haven't opted out will have their iPad apps automatically show up for download to Apple Vision Pro.

Spatial Computing competition

Apple may have only just announced its Spatial Computing platform, but that doesn't mean it's the only one on the market. Competitors have been exploring AR and other extended-reality solutions for over a decade.

Google has abandoned Glass, and Microsoft has moved on from HoloLens, but other options still exist. The Xreal Air glasses are among the few products attempting to provide AR today, despite the limited technology in play at the consumer level.

Samsung is expected to announce its answer to Apple Vision Pro sometime in 2023.

Using Apple Vision Pro

Apple is providing developer labs and kits to help with the pre-release development of apps for the Spatial Computing platform. This has allowed some first-hand experience beyond Apple's initial WWDC announcement to make it out to the public.

Overall, those who have interacted with the headset come away with similar ideas and feelings. This is a first-generation product that is entering a crowded space but also stands apart as a mix of AR and VR with high-end technology making things feel effortless.

Users familiar with competitors' headsets say that Apple Vision Pro has a wider and taller field of view. Certain lighting environments, like super bright rooms or dim areas, show the limitations of the video feed, but it isn't distracting.

Eye tracking and gesture controls seem to be excellent, even in pre-release demos. These systems feel well thought out and executed and are missing some of the usual growing pains new interaction paradigms tend to have.

Dedicated apps seem to work well and blend in with the environment, giving enterprise developers an excellent option for job or maintenance training. And while Apple says iPad apps can be ported with little effort, developers will need to give some attention to their apps to ensure legibility and controls translate to the platform.

Weight wasn't an issue, though after over an hour of constant use, fatigue did start to set in. Eye strain didn't seem to be an issue, given the quality of the displays.

Apple Vision Pro release date and price

Apple tends to announce products shortly before they are released, but since Apple Vision Pro is a new platform, it gave itself plenty of space. Developers were given about a year to work on their visionOS experiences, and developer kits are available with stringent secrecy protocols via a request application.

Apple Store retail staff will begin training on the new hardware and software at the start of 2024. Managers will be sent off to gather information to present to the rest of the team.

Apple Vision Pro will launch in early 2024, which could be anytime before July. The price starts at $3,499, which could indicate some upgrade options as well as accessory pairing at checkout.

Malcolm Owen


Mike Wuerthele


Wesley Hilliard


William Gallagher
